Why Traditional SEO Fails in the AI Answers: Tracking Citations and Sentiment in LLMs

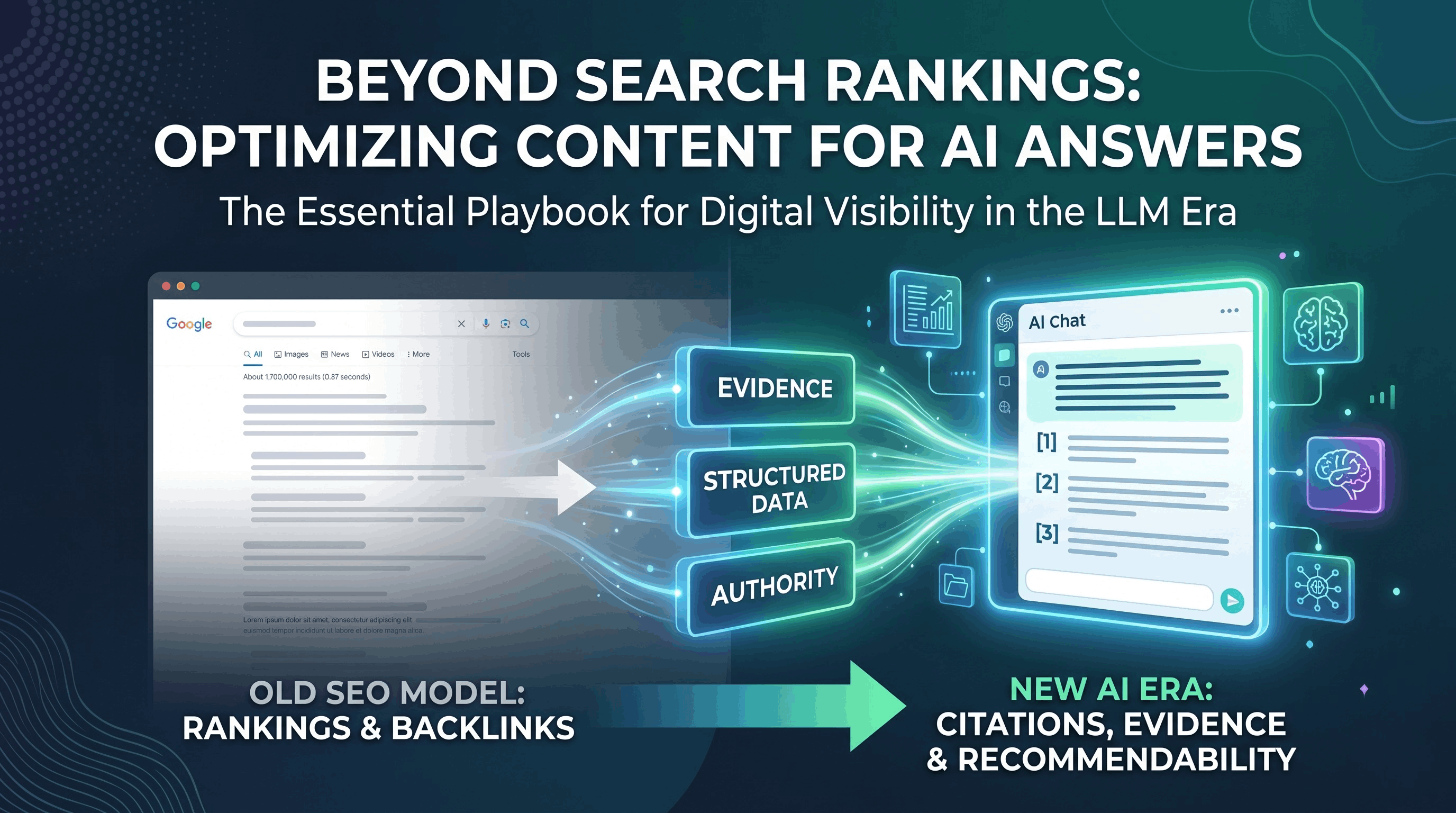

AI assistants now deliver direct answers that cite sources and surface evidence; ranking #1 on search engines no longer guarantees being quoted. To win AI-era visibility you must optimize for citations, evidence grounding, stance, and content recommendability — not just organic rank or backlinks. This means structuring pages for extraction, increasing inline citations, and proving content is authoritative and recommendable to LLMs.

The Moment SEO Quietly Changed

For years, SEO followed a predictable pattern.

You researched keywords, optimized a page, built backlinks—and if you did it well, you ranked. And if you ranked, you got traffic.

Simple.

But something subtle has shifted.

Today, you can still do everything “right”—

rank #1, have better content, stronger backlinks—and still watch your traffic plateau or even decline.

Not because your SEO is failing.

But because search itself has changed.

Users aren’t just searching anymore.

They’re asking.

And increasingly, they’re asking AI.

The New Reality: Answers Are Replacing Results

Think about how you search today.

When you open ChatGPT, Perplexity, or Google’s AI-powered results, you don’t see a list of links first.

You see:

A direct answer

A summarized explanation

A synthesized response pulled from multiple sources

In many cases, that answer is enough.

You don’t click anything.

You don’t compare pages.

You move on.

This is the shift most SEOs underestimate:

Search is no longer just about finding information.

It’s about delivering conclusions instantly.

And that fundamentally changes what visibility means.

TL;DR: What’s Actually Changing

Ranking on Google no longer guarantees visibility

AI systems choose sources—not just positions

Users are clicking less, but still consuming content

Visibility is shifting from pages → answers

The goal is no longer just traffic—it’s inclusion in AI outputs

Why Traditional SEO Feels Like It’s Failing

Let’s be clear: SEO itself isn’t broken.

But the model it was built on is.

Traditional SEO assumes:

Users search using keywords

They browse results

They click links

They read content

That journey is now being compressed into a single step:

👉 Ask → Get answer → Leave

And if your content isn’t part of that answer, your ranking doesn’t matter.

Where Traditional SEO Starts to Break Down

1. Rankings Don’t Equal Visibility Anymore

There was a time when ranking #1 meant dominance.

Now, it simply means:

👉 You’re one of many potential sources

AI systems don’t just take the top result.

They evaluate multiple sources and extract what they need.

That means:

You can rank highly and still be ignored

A lower-ranking page can be chosen instead

Because AI is optimizing for:

Clarity

Relevance

Extractability

Not just position.

2. The Click Is No Longer Guaranteed

This is where most businesses feel the impact.

Traffic declines—even when rankings hold steady.

Why?

Because the answer is already visible.

Users don’t need to visit your page to:

Understand a concept

Compare options

Make a decision

A subtle but critical shift:

Your content can influence decisions

without ever receiving a click

3. Content Is Judged Differently

Traditional SEO rewards:

Keyword usage

Backlink quantity

Technical optimization

AI systems evaluate something deeper:

How clearly ideas are expressed

Whether information can be extracted cleanly

How well a topic is covered in context

Whether the content aligns with known entities

In other words:

It’s not about how optimized your content is.

It’s about how usable it is.

4. Authority Has Expanded Beyond Backlinks

Backlinks still matter—but they’re no longer enough to signal authority.

AI systems look for:

Repeated mentions across the web

Consistent topical expertise

Presence in multiple contexts

Alignment with known entities

This creates a new dynamic:

👉 A brand that is frequently mentioned may outperform one that is simply well-linked.

Introducing the AI Visibility Framework

To understand what replaces traditional SEO thinking, you need a new model.

The AI Visibility Stack

Layer 1: Rankings (Discovery)

This is still your entry point. It helps your content get found and indexed.

Layer 2: Citations (Inclusion)

This determines whether your content is selected and used inside AI-generated answers.

Layer 3: Mentions (Authority)

This builds trust by showing your brand is recognized across multiple sources.

The most important shift to understand:

Ranking gets you indexed.

Citations get you included.

Mentions get you trusted.

How AI Actually Decides What to Include

AI systems are not just scraping—they’re evaluating.

They prioritize content that is:

Structured for Extraction

Content that is easy to break down:

Clear headings

Defined sections

Direct answers

If a key insight is buried in a long paragraph, it’s less likely to be used.

Contextually Complete

AI prefers content that doesn’t just answer a question—but fully supports it.

This includes:

Related subtopics

Supporting ideas

Clear relationships between concepts

Consistently Referenced

If multiple sources point to the same brand or idea, it reinforces credibility.

This is how AI builds confidence in what it presents.

Easy to Synthesize

Content with:

High clarity

Minimal fluff

Strong signal density

…is far more likely to be included.

A Simple Example That Explains Everything

Imagine two articles on the same topic.

Article A:

Ranks #1

Long, detailed, but loosely structured

Minimal brand presence elsewhere

Article B:

Ranks #3

Clearly structured

Direct answers

Brand mentioned across multiple sources

Now a user asks an AI platform a question.

Which one gets used?

👉 In most cases, Article B

Because it’s:

Easier to extract

Easier to trust

Easier to synthesize

The Real Shift: From Traffic to Influence

Traditional SEO was built around traffic.

More rankings → more clicks → more conversions

But AI search introduces a new layer:

👉 Influence without interaction

Your content can:

Shape decisions

Guide thinking

Build authority

…without ever being visited.

That changes the goal completely:

Then | Now |

|---|---|

Rank higher | Be selected |

Get clicks | Be cited |

Drive traffic | Build visibility |

Earn backlinks | Earn mentions |

What Actually Works Now (Practical Strategy)

This is where most content falls short.

Understanding the shift is one thing.

Adapting to it is another.

1. Write for Extraction

Your content should make it easy for AI to pull insights.

That means:

Clear headings

Direct answers early

Concise paragraphs

Every section should feel independently useful.

2. Build “Citable Moments”

Instead of long explanations, include:

Strong statements

Clear definitions

Standalone insights

Ask yourself:

👉 “If this paragraph is shown alone, does it still make sense?”

3. Go Deep, Not Just Wide

Cover topics thoroughly:

Include related concepts

Address sub-questions

Connect ideas clearly

This improves:

Semantic relevance

Topic authority

Inclusion probability

4. Expand Beyond Your Website

AI doesn’t just evaluate your site—it evaluates your presence.

You need visibility across:

Industry blogs

Communities

Discussions

Third-party content

5. Reduce Content Noise

More words don’t mean more value.

Focus on:

Clarity

Precision

Signal strength

Cut anything that doesn’t add meaning.

Common Mistakes That Hold Content Back

Writing long but unstructured articles

Repeating the same idea in different words

Focusing only on keywords

Ignoring brand presence outside your site

Treating backlinks as the only authority signal

Where This Is Heading

This shift is still early—but it’s accelerating.

AI will continue to:

Replace traditional search journeys

Reduce reliance on links

Increase importance of trusted sources

The brands that adapt early will:

Own more visibility

Shape more decisions

Build stronger authority

Final Thought

SEO isn’t disappearing.

But it’s no longer enough to be found.

You need to be selected.

Because in AI search, visibility doesn’t go to the page that ranks highest.

It goes to the content that is:

Clear enough to extract

Strong enough to trust

Present enough to validate

Want to Know If You’re Actually Visible?

Most tools still track rankings.

But rankings don’t tell you:

If AI is using your content

Where your brand appears

How often you’re being cited

If you want to understand your real visibility:

👉 Authority Radar helps you track

AI citations

Brand mentions

Competitive visibility in AI answers