Why ChatGPT, Gemini, and Perplexity Cite Different Brands (And What It Means for Your SEO Strategy)

A Brand Can Rank #1 — And Still Be Invisible in AI Answers

We ran a simple test across AI platforms.

Same brand.

Same queries.

Same competitors.

And yet—

In one platform, the brand appeared as a cited source

In another, it was mentioned but never cited

In another, third-party websites dominated almost entirely

This wasn’t a hypothesis.

It came directly from campaign data.

A Quick Note on This Analysis

AI platforms do not publicly disclose how they select or prioritize sources.

So instead of making assumptions, this analysis is based on:

A real campaign for HubSpot (CRM)

Identical query sets across platforms

Observed outputs from tools tracking:

Mentions

Citations

Source distribution

Share of voice

👉 These are observed patterns, not fixed rules.

The Campaign Setup

We analyzed a CRM-focused campaign tracking queries such as:

Best CRM with marketing automation

Best CRM with WhatsApp integration

Best CRM with email automation

Across platforms including:

Perplexity

Grok (xAI)

Each platform was evaluated on:

Brand mentions

Brand citations

Source distribution

Competitor visibility

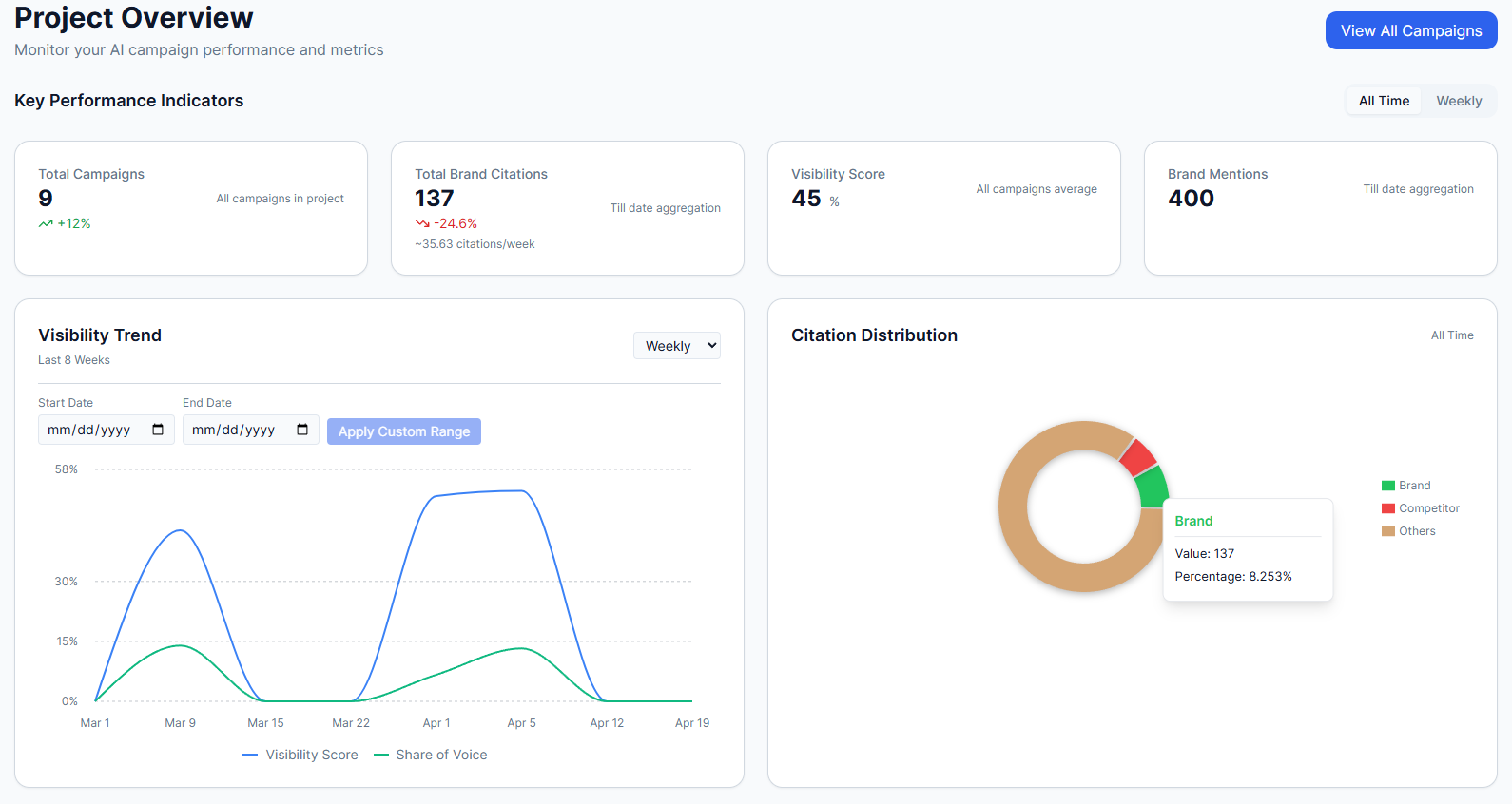

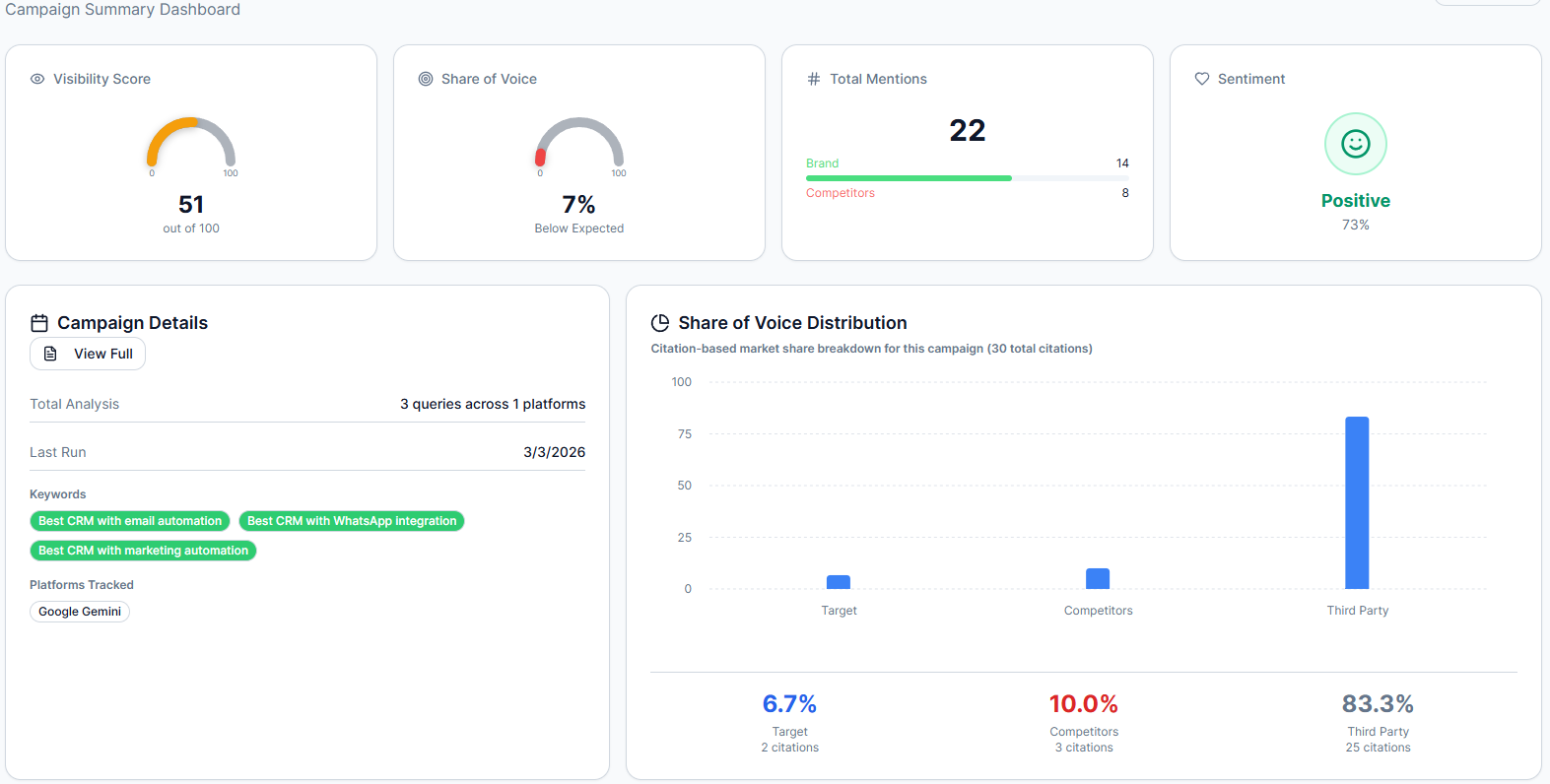

Caption: Campaign overview showing citation distribution and visibility for CRM queries on Perplexity

What We Observed Across Platforms

1. Same Queries, Different Citation Outcomes

For the same set of CRM queries:

In Perplexity:

The target brand (HubSpot) appeared as a cited source

Citations included a mix of:

Brand-owned content

Third-party comparison sites

Visibility was distributed across multiple sources

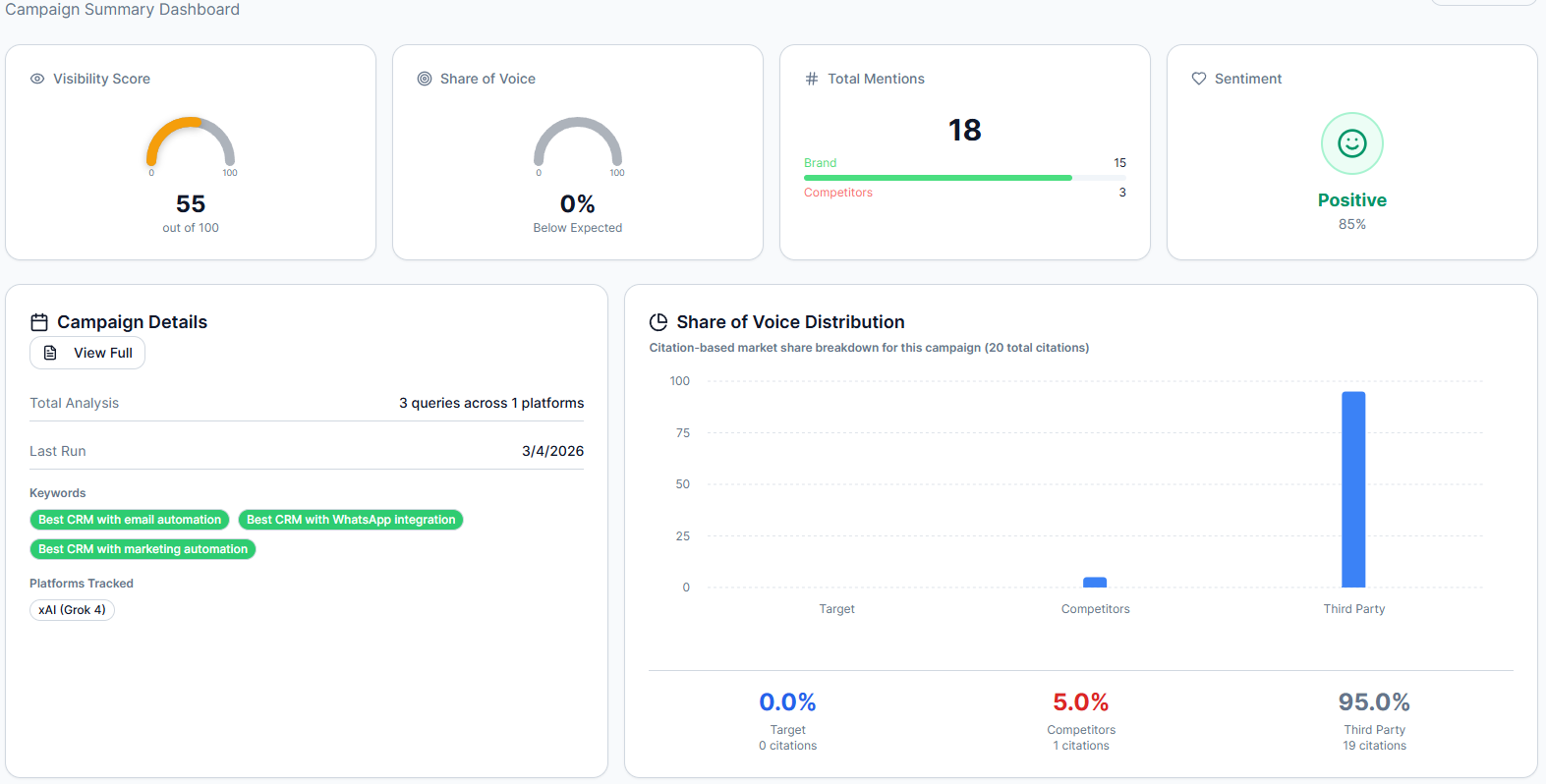

In Grok (xAI):

The same brand (HubSpot) received:

15 mentions

0 citations

Approximately 95% of citations came from third-party sources

Caption: Same queries on Grok (xAI): brand mentioned but not cited; third-party sources dominate

What this suggests

For identical queries, the brand was part of the cited source layer on one platform, but on another it appeared only in the generated text and never among the sources.

2. Mentions vs Citations: A Critical Gap

In the Grok dataset:

Brand mentions: 15

Brand citations: 0

Why this matters

This highlights a key distinction:

A brand can be part of the generated answer, but not part of the sources used to support it.

In practical terms:

Mentions → awareness

Citations → authority, attribution, and likely traffic
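To make the distinction concrete, here is a minimal Python sketch of how the two counts could be computed for a single AI answer. It assumes you already have the answer text and the list of cited URLs; the function names, the sample answer, and the example.com URLs are hypothetical and are not taken from the campaign tooling.

```python
import re
from urllib.parse import urlparse


def count_mentions(answer_text: str, brand: str) -> int:
    """Count case-insensitive whole-word occurrences of the brand name."""
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    return len(pattern.findall(answer_text))


def count_citations(cited_urls: list[str], brand_domain: str) -> int:
    """Count cited URLs hosted on the brand domain or one of its subdomains."""
    total = 0
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == brand_domain or host.endswith("." + brand_domain):
            total += 1
    return total


# Hypothetical answer and sources, for illustration only.
answer = "HubSpot is a popular option. Reviewers also rate HubSpot highly for email automation."
sources = [
    "https://reviews.example.com/best-crm",
    "https://blog.example.org/crm-comparison",
]

print(count_mentions(answer, "HubSpot"))          # brand appears in the text
print(count_citations(sources, "hubspot.com"))    # brand is absent from the sources
```

In this made-up case the brand is mentioned in the answer but none of the cited URLs belong to it, which is the same shape as the mention and citation gap described above.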

3. Third-Party Sources Can Dominate Visibility

In the Grok campaign:

~95% of citations came from third-party sources

These included:

Review platforms

Comparison blogs

Industry content sites

What this suggests

In some AI-generated responses, visibility may depend heavily on:

👉 External ecosystem coverage—not just your own website
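A third-party share like the one above can be approximated by classifying each cited URL by its host. The sketch below is one possible approach, not the method used by the tracking tools: it assumes you maintain your own sets of brand and competitor domains, and it treats every other host as third-party. The URLs and the total of 20 citations are illustrative.

```python
from urllib.parse import urlparse


def _matches(host: str, domains: set[str]) -> bool:
    """True if host equals one of the domains or is a subdomain of it."""
    return any(host == d or host.endswith("." + d) for d in domains)


def citation_shares(cited_urls, brand_domains, competitor_domains):
    """Return the fraction of citations that are brand, competitor, or third-party."""
    counts = {"brand": 0, "competitor": 0, "third_party": 0}
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if _matches(host, brand_domains):
            counts["brand"] += 1
        elif _matches(host, competitor_domains):
            counts["competitor"] += 1
        else:
            # Assumption: anything not owned by the brand or a known
            # competitor is counted as third-party.
            counts["third_party"] += 1
    total = len(cited_urls)
    return {k: (v / total if total else 0.0) for k, v in counts.items()}


# Illustrative input: 19 third-party URLs and 1 competitor URL.
urls = ["https://reviews.example.com/crm-%d" % i for i in range(19)]
urls.append("https://competitor.example.net/pricing")

shares = citation_shares(urls, {"hubspot.com"}, {"competitor.example.net"})
print(shares)
```

The result depends heavily on how complete the domain lists are, so a share computed this way is best read as an estimate.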

4. Platform Behavior Is Not Consistent

Comparing the same campaign across platforms:

Perplexity included the brand within cited sources

Grok relied heavily on third-party citations

The same queries produced different attribution patterns

What this suggests

Different platforms may construct answers differently—leading to variation in:

Which sources are cited

How brands are represented

How much weight is given to third-party content

Interpreting These Differences (Carefully)

We are not claiming that any platform follows a fixed rule.

However, based on repeated observations, differences in citation behavior may relate to:

How easily content can be summarized

How structured or comprehensive it is

Whether external references support the content

The role of third-party validation in the answer

A Closer Look: Same Brand, Same Queries — Different Outcomes

For the CRM campaign:

Queries analyzed:

Best CRM with marketing automation

Best CRM with WhatsApp integration

Best CRM with email automation

Observed outcome:

In Perplexity:

HubSpot was included in cited sources

Appeared alongside comparison content

Contributed directly to the answer’s references

In Grok:

HubSpot appeared in generated text (15 mentions)

But did not appear in citations

Third-party sources dominated the reference layer

Interpretation

The difference was not in:

Query intent

Brand relevance

Topic coverage

It was in:

👉 How each platform selected and displayed sources

What This Means for SEO Teams

1. Ranking Does Not Guarantee Inclusion

A page can perform well in traditional search and still:

Not be cited

Not be attributed

Not influence AI-generated answers

2. AI Visibility Is Multi-Layered

From the campaign data, visibility operates across layers:

Mention layer → brand appears in text

Citation layer → brand is used as a source

Source ecosystem layer → third-party sites are cited alongside, or instead of, the brand

3. Your Content Is Only Part of the Equation

With third-party citations dominating in some cases:

👉 Visibility may depend on:

Review coverage

Listicles

Industry mentions

External validation

4. Multi-Model Gaps Create Opportunity

Most brands are likely to be:

Strong in one platform

Underrepresented in another

Without tracking this, these gaps remain invisible.

A Practical Framework (Based on Observations)

Step 1: Audit Across Platforms

Track the following across multiple AI systems:

Mentions

Citations

Source distribution

Step 2: Identify Gaps

Look for patterns like:

High mentions, low citations

Strong presence in one platform, weak in another

Third-party dominance replacing your brand
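The three patterns above can be turned into simple flags once per-platform counts are available. The following Python sketch is one way to do that, under stated assumptions: the `PlatformStats` record, the flag names, and the 0.8 threshold are hypothetical. The Grok mentions and brand citations come from the campaign (15 and 0); the citation totals and all Perplexity figures are placeholders, since the campaign data above does not report them.

```python
from dataclasses import dataclass


@dataclass
class PlatformStats:
    platform: str
    mentions: int
    brand_citations: int
    third_party_citations: int
    total_citations: int


def find_gaps(stats, third_party_threshold=0.8):
    """Flag the gap patterns for each platform; returns {platform: [flags]}."""
    cited_somewhere = any(s.brand_citations > 0 for s in stats)
    gaps = {}
    for s in stats:
        flags = []
        # High mentions, low citations.
        if s.mentions > 0 and s.brand_citations == 0:
            flags.append("mentioned_not_cited")
        # Third-party dominance replacing the brand.
        if s.total_citations > 0:
            share = s.third_party_citations / s.total_citations
            if share >= third_party_threshold:
                flags.append("third_party_dominant")
        # Strong presence on one platform, weak on another.
        if s.brand_citations == 0 and cited_somewhere:
            flags.append("cited_elsewhere")
        gaps[s.platform] = flags
    return gaps


stats = [
    # Placeholder values: the article gives no counts for Perplexity.
    PlatformStats("Perplexity", mentions=10, brand_citations=3,
                  third_party_citations=5, total_citations=10),
    # 15 mentions and 0 citations are observed; totals are illustrative.
    PlatformStats("Grok", mentions=15, brand_citations=0,
                  third_party_citations=19, total_citations=20),
]

for platform, flags in find_gaps(stats).items():
    print(platform, flags)
```

The threshold is a judgment call. A lower value surfaces more platforms for review, and a higher one limits the list to the most lopsided cases.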

Step 3: Adjust Content Strategy

Based on observed patterns:

Improve clarity and extractability

Strengthen structure and depth

Increase presence in third-party ecosystems

Build stronger evidence and credibility

Step 4: Monitor Continuously

These patterns are not static.

They evolve with:

Model updates

Content ecosystem changes

Query trends

Why This Matters Commercially

If your brand:

Is not cited

Is replaced by competitors

Or is overshadowed by third-party sources

Then:

👉 You lose visibility before users even visit a website

The Bigger Shift: From Rankings to Representation

Traditional SEO:

Where do we rank?

AI-driven discovery:

How are we represented—and cited—across platforms?

Final Takeaway

From this campaign, we observed:

Identical queries producing different citation outcomes

Mentions without citations

Strong third-party dominance in some platforms

Clear variation across AI systems

We are not defining rules.

But we are seeing a pattern:

AI visibility is fragmented—and model-dependent.

Want to See Your Own Data?

Most teams track rankings.

Few track:

Citations

Mentions

Platform-level visibility

Source distribution

Authority Radar helps you:

Track performance across AI platforms

Identify where you’re cited (and where you’re not)

Analyze competitor presence

Act on real data

👉 Start your first campaign and understand your true AI visibility.