AI Citation Gaps: How Brands Can Find, Measure, and Close Visibility Gaps in AI Search

You may rank on the first page of Google. Your traffic numbers look fine. But in the answers your buyers now trust most — from ChatGPT, Perplexity, Gemini, and AI-powered search — your brand is nowhere to be found. That's the new problem. And most teams don't know they have it yet.

"Your brand may rank on Google and still be missing from the answers your buyers now trust most. That gap — between traditional visibility and AI answer visibility — is where market share quietly gets lost."

Search behavior has shifted faster than most marketing teams have adjusted. A growing share of buyers now open ChatGPT, Perplexity, or Gemini and ask direct questions: What's the best tool for X? Compare A and B. What do experts recommend? These users don't browse a results page. They read a generated answer, and if your brand isn't cited, mentioned, or associated with the right topics, you're invisible before the conversation even begins.

This creates a structural problem that traditional SEO metrics won't surface. You can have strong domain authority, healthy rankings, and solid organic traffic — and still be systematically missing from the answers AI systems generate for your most important buying prompts. That gap is what we call an AI citation gap.

This guide explains what AI citation gaps are, why they happen, how to diagnose them, and — critically — how to close them before competitors do.

What Is an AI Citation Gap?

An AI citation gap is the difference between:

The topics and prompts where your brand should appear — based on your market position, product category, and buyer intent — and the answers where AI platforms actually mention or cite you.

In practice, it shows up in a few recognizable ways:

A buyer asks "What are the best tools for [your category]?" — competitors appear, you don't.

Your brand is mentioned in passing but not cited; no link, no reference, no attribution.

You appear for branded prompts (people who already know your name) but not for category prompts (people discovering their options).

AI answers cite third-party review sites or editorial roundups about your category — but those sources either exclude you or position you weakly.

Competitors "own" conceptual territory — certain features, use cases, outcomes — while your positioning is absent or vague in AI-generated answers.
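The definition above can be read as a set difference: the prompts where you expect to appear, minus the prompts where you were actually observed. A minimal sketch, assuming you already have both lists. The prompt strings and the function names `citation_gap` and `coverage_rate` are illustrative, not part of any tool:

```python
# Hypothetical data: prompts where the brand should appear, and prompts
# where it was actually mentioned or cited in an AI answer.
expected_prompts = {
    "best tools for X",
    "A vs B",
    "alternatives to A",
    "how to solve Y",
}
observed_prompts = {"A vs B"}


def citation_gap(expected: set[str], observed: set[str]) -> set[str]:
    """Prompts where the brand should appear but does not."""
    return expected - observed


def coverage_rate(expected: set[str], observed: set[str]) -> float:
    """Share of expected prompts where the brand actually appears."""
    if not expected:
        return 0.0
    return len(expected & observed) / len(expected)


gap = citation_gap(expected_prompts, observed_prompts)
rate = coverage_rate(expected_prompts, observed_prompts)
print(sorted(gap))
print(f"coverage: {rate:.0%}")
```

The hard part in practice is deciding what belongs in `expected_prompts`. That is a judgment about market position and buyer intent, not something the code can supply.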

Why this matters now

In AI search, presence influences awareness, citations build trust, and repeated mentions create category association. Absence doesn't just lose clicks — it removes you from the shortlist before a buyer even begins to evaluate options.

Why Brands Are Losing Visibility in AI Answers

The causes of AI citation gaps are rarely about a single content failure. They're usually the result of several compounding weaknesses — and understanding them is the first step to fixing them.

1. Their content is present, but not citation-worthy

AI systems tend to surface content that is clear, comprehensive, well-structured, evidence-backed, and closely aligned to the specific prompt being asked. Publishing regularly isn't enough. Content that is too broad, too shallow, or that doesn't directly answer buyer questions rarely earns a citation, even if it ranks.

2. Their entity footprint is weak

AI systems build associations between brands and concepts. If your brand is not consistently linked — across your own content, third-party sources, review platforms, and community discussions — to the right category terms, use cases, comparisons, and solution attributes, it becomes less likely to surface when those topics are queried. This is the entity problem. Strong SEO without strong entity coverage is increasingly insufficient.

3. Competitors have stronger third-party authority

AI systems don't rely only on first-party content. They draw from review sites, editorial roundups, comparison pages, analyst commentary, community discussions, and thought leadership. If competitors are reinforced by a richer ecosystem of authoritative third-party references — and you're not — they win citations regardless of your content quality.

4. The brand is not being monitored at the prompt level

Most marketing teams still track rankings, traffic, and backlinks. Very few track prompt-level presence: which AI answers mention them, how often, alongside which competitors, and with what sentiment. Without prompt-level visibility data, teams can't see the gap — and can't close it.

5. Content strategy is built for search engines, not answer engines

Traditional SEO prioritizes discoverability through keywords and links. AI answer visibility requires something additional: direct answerability, semantic topic coverage, entity reinforcement, trustworthy citation patterns, and relevance to the way buyers naturally ask questions. These aren't the same discipline — and treating them as identical is a strategic mistake.

How AI Platforms Decide What to Mention or Cite

You don't need to understand the technical architecture of large language models to improve your AI visibility. But you do need to understand how AI answers are shaped — because the signals are different from what SEO has trained most teams to optimize for.

AI-generated answers are influenced by a combination of factors:

Topical relevance

Entity clarity

Source authority

Citation availability

Answer structure

Content freshness

Web-wide consensus

Trusted corroboration

The brands that appear most consistently in AI answers tend to score well across all of these — not just one or two. That's why a single strong blog post rarely moves the needle, but a coordinated presence across owned content, third-party references, and structured information does.

Mentions and citations are not the same thing

It's worth being precise here. A mention means your brand appears in the generated answer. A citation means a specific source is linked, referenced, or attributed. The strongest position is: a positive mention, supported by a clear citation from an authoritative source, repeated across multiple prompts and platforms. Each element compounds the other.
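To keep the two apart when recording results, it can help to classify each answer explicitly. The sketch below assumes you have captured the answer text and the list of URLs the platform showed as sources. It treats "cited" narrowly as "one of your own domains appears among the sources", which is only one possible reading; a supportive third-party source could count too. The brand "Acme" and the domain `acme.example` are hypothetical:

```python
import re
from urllib.parse import urlparse


def is_mentioned(answer_text: str, brand: str) -> bool:
    """True if the brand name appears as a whole word, case-insensitively."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, answer_text, flags=re.IGNORECASE) is not None


def is_cited(cited_urls: list[str], owned_domains: set[str]) -> bool:
    """True if any cited URL belongs to one of the brand's own domains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host in owned_domains:
            return True
    return False


def classify(answer_text: str, cited_urls: list[str],
             brand: str, owned_domains: set[str]) -> str:
    """Label an answer by whether the brand is mentioned, cited, both, or neither."""
    mentioned = is_mentioned(answer_text, brand)
    cited = is_cited(cited_urls, owned_domains)
    if mentioned and cited:
        return "mentioned and cited"
    if mentioned:
        return "mentioned only"
    if cited:
        return "cited only"
    return "absent"


answer = "Popular options include Acme and Globex."
label_a = classify(answer, ["https://reviews.example.com/best-tools"],
                   "Acme", {"acme.example"})
label_b = classify(answer, ["https://www.acme.example/pricing"],
                   "Acme", {"acme.example"})
print(label_a)
print(label_b)
```

Simple name matching will miss misspellings and product names that differ from the company name, so treat the labels as a first pass to review by hand.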

Rankings alone are no longer sufficient

A brand can rank on the first page, attract meaningful traffic, and still be systematically underrepresented in AI-generated recommendations. This is the critical shift. AI answers synthesize across many sources and apply their own relevance judgments, which your ranking position doesn't directly influence. Adapting to this reality requires a different set of inputs, metrics, and strategies.

The 5 Types of AI Citation Gaps Brands Should Audit

Not all AI citation gaps are the same problem. Diagnosing which type of gap you have — and where it's most acute — determines where to focus your effort. Here are the five categories that matter most.

Gap Type 01

Prompt Coverage Gap

You appear for too few of the prompts your buyers actually ask — particularly category queries, comparison queries, alternatives prompts, and problem/solution queries.

Gap Type 02

Mention Gap

Competitors are named more often than you across the same answer set. Even when you appear, you appear less frequently and with less emphasis.

Gap Type 03

Citation Gap

Your brand is mentioned in AI answers, but the cited sources belong to competitors or third parties. The credibility signal flows to them, not to you.

Gap Type 04

Sentiment / Positioning Gap

You are described less favorably, less clearly, or with weaker differentiation than competitors. Vague or inconsistent positioning in source material compounds this.

Gap Type 05

Entity / Topic Gap

The broader web does not strongly associate your brand with the subtopics, features, use cases, or problems that matter most to your buyers. You may own the homepage but not the conversation around the category.

Gap type reference

| Gap Type | What It Means | Symptom Example | Business Risk |
|---|---|---|---|
| Prompt Coverage | Missing from key buyer prompts | Not seen in "best [category] tools" answers | Lost discovery |
| Mention Gap | Competitors named more often | Rival appears in answers repeatedly; you rarely do | Lower consideration |
| Citation Gap | Weak source attribution | Third-party pages cited instead of yours | Lower trust |
| Sentiment Gap | Poor or vague framing in answers | Weak or unclear recommendation context | Lower conversion |
| Entity Gap | Weak topic association | Absent from use-case and feature-level prompts | Category irrelevance |

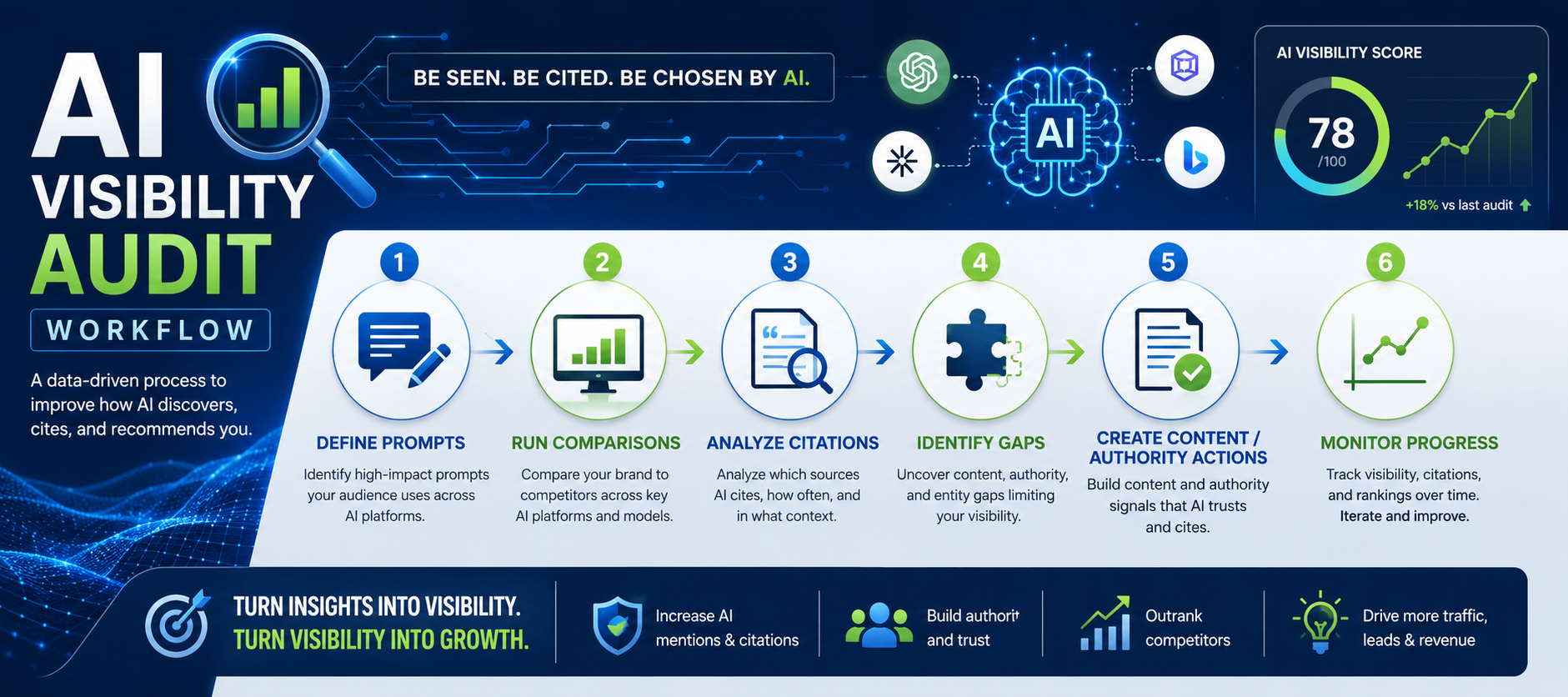

How to Audit Your Brand's AI Visibility

A meaningful AI visibility audit is not a one-time exercise. But it starts with a structured first pass that establishes the baseline against which all future measurements are compared.

Build a prompt set that reflects real buyer behavior

Go beyond branded prompts. Map out the full range of queries your buyers actually use: category prompts ("best tools for X"), comparison prompts ("A vs B"), alternatives prompts ("alternatives to [competitor]"), feature/use-case prompts, problem-solving prompts, and industry-specific variations. This prompt set becomes the foundation of your ongoing measurement.

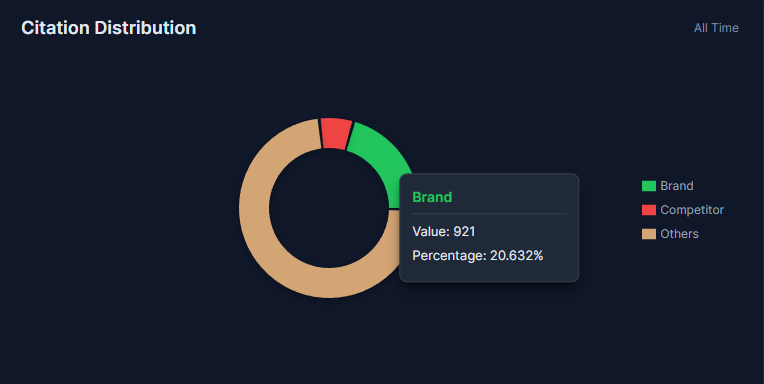
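One way to keep a prompt set consistent from one audit to the next is to generate it from templates rather than typing prompts ad hoc. A minimal sketch, assuming four of the prompt types named above. The templates, the brand "Acme", and the competitor names are placeholders, and both input lists are assumed to be non-empty:

```python
from itertools import product

# Hypothetical templates, one list per prompt type. Extend with your own phrasing.
TEMPLATES = {
    "category": ["best tools for {category}"],
    "comparison": ["{brand} vs {competitor}"],
    "alternatives": ["alternatives to {competitor}"],
    "use_case": ["best {category} tool for {use_case}"],
}


def build_prompt_set(brand, category, competitors, use_cases):
    """Expand every template over competitors and use cases, dropping duplicates."""
    seen: dict[str, str] = {}
    for prompt_type, templates in TEMPLATES.items():
        for template, competitor, use_case in product(templates, competitors, use_cases):
            text = template.format(
                brand=brand,
                category=category,
                competitor=competitor,
                use_case=use_case,
            )
            # Templates that ignore a field produce repeats; keep the first.
            seen.setdefault(text, prompt_type)
    return [{"type": prompt_type, "prompt": text} for text, prompt_type in seen.items()]


prompt_set = build_prompt_set(
    brand="Acme",
    category="invoicing",
    competitors=["Globex", "Initech"],
    use_cases=["startups"],
)
for row in prompt_set:
    print(row["type"], "|", row["prompt"])
```

Generated prompts are a starting point. Real buyer phrasing from sales calls, support tickets, and community threads should be added by hand.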

Track which platforms mention you — and where you're absent

Run your prompt set across ChatGPT, Perplexity, Gemini, and any other AI answer environments relevant to your market. Platform behavior differs meaningfully. A brand may appear consistently in Perplexity answers and rarely in ChatGPT, or vice versa. These platform-level differences matter for strategy.
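A small harness makes platform-level differences easier to see. The sketch below deliberately does not call any real platform API. It assumes you supply one function per platform that takes a prompt and returns the answer text, however you obtain it (an official API, a vendor tool, or pasted-in results). The stub functions and platform names are stand-ins:

```python
from typing import Callable

# An "asker" takes a prompt and returns the answer text.
Asker = Callable[[str], str]


def run_prompt_set(prompts: list[str], platforms: dict[str, Asker]) -> list[dict]:
    """Ask every prompt on every platform and collect the raw answers."""
    results = []
    for platform, ask in platforms.items():
        for prompt in prompts:
            results.append({"platform": platform, "prompt": prompt, "answer": ask(prompt)})
    return results


def presence_by_platform(results: list[dict], brand: str) -> dict[str, dict[str, int]]:
    """Count, per platform, how many answers contain the brand name."""
    presence: dict[str, dict[str, int]] = {}
    for row in results:
        stats = presence.setdefault(row["platform"], {"present": 0, "total": 0})
        stats["total"] += 1
        if brand.lower() in row["answer"].lower():
            stats["present"] += 1
    return presence


# Stub askers standing in for real platforms (hypothetical output).
def fake_platform_a(prompt: str) -> str:
    return "Top picks: Acme, Globex."


def fake_platform_b(prompt: str) -> str:
    return "Top picks: Globex, Initech."


results = run_prompt_set(
    ["best tools for X", "alternatives to Globex"],
    {"platform_a": fake_platform_a, "platform_b": fake_platform_b},
)
presence = presence_by_platform(results, "Acme")
print(presence)
```

Because AI answers can vary between runs of the same prompt, a real audit would likely repeat each prompt several times and store the date alongside each result.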

Compare your visibility against competitors

For each prompt, record who is mentioned, how often, in what context, and with what framing. Calculate mention frequency, share of voice, citation frequency, citation source type, sentiment, and answer position. This comparative data is where the most actionable insights emerge.
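Three of these metrics are simple arithmetic once each answer has been reduced to an ordered list of the brands it names. The sketch below uses one reasonable set of definitions, not an industry standard: mention frequency is the fraction of answers naming the brand, share of voice is the brand's share of all brand mentions, and answer position is the average rank at which the brand is named. All data is hypothetical:

```python
from collections import Counter

# Hypothetical audit results: brands named in each answer, in order of appearance.
answers = [
    {"prompt": "best tools for X", "brands": ["Globex", "Initech", "Acme"]},
    {"prompt": "alternatives to Globex", "brands": ["Initech"]},
    {"prompt": "A vs B", "brands": ["Globex", "Acme"]},
    {"prompt": "how to solve Y", "brands": ["Globex"]},
]


def mention_frequency(answers: list[dict], brand: str) -> float:
    """Fraction of answers that name the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for a in answers if brand in a["brands"])
    return hits / len(answers)


def share_of_voice(answers: list[dict]) -> dict[str, float]:
    """Each brand's share of all brand mentions, counting a brand once per answer."""
    counts = Counter(b for a in answers for b in set(a["brands"]))
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


def average_position(answers: list[dict], brand: str):
    """Mean 1-based rank of the brand in the answers that name it, or None."""
    positions = [a["brands"].index(brand) + 1 for a in answers if brand in a["brands"]]
    return sum(positions) / len(positions) if positions else None


sov = share_of_voice(answers)
print(f"Acme mention frequency: {mention_frequency(answers, 'Acme'):.0%}")
print(f"Acme share of voice: {sov['Acme']:.1%}")
print(f"Acme average position: {average_position(answers, 'Acme')}")
```

Sentiment and framing are left out on purpose. They need human review or a separate classifier, and a wrong automated score is worse than none.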

Review what sources the AI is relying on

Look for patterns in the citations AI systems use. Are competitors being cited through editorial roundups? Software directories? Their own blog? Review platforms? Knowing which source categories drive competitor citations tells you where your authority is weakest — and where to invest.

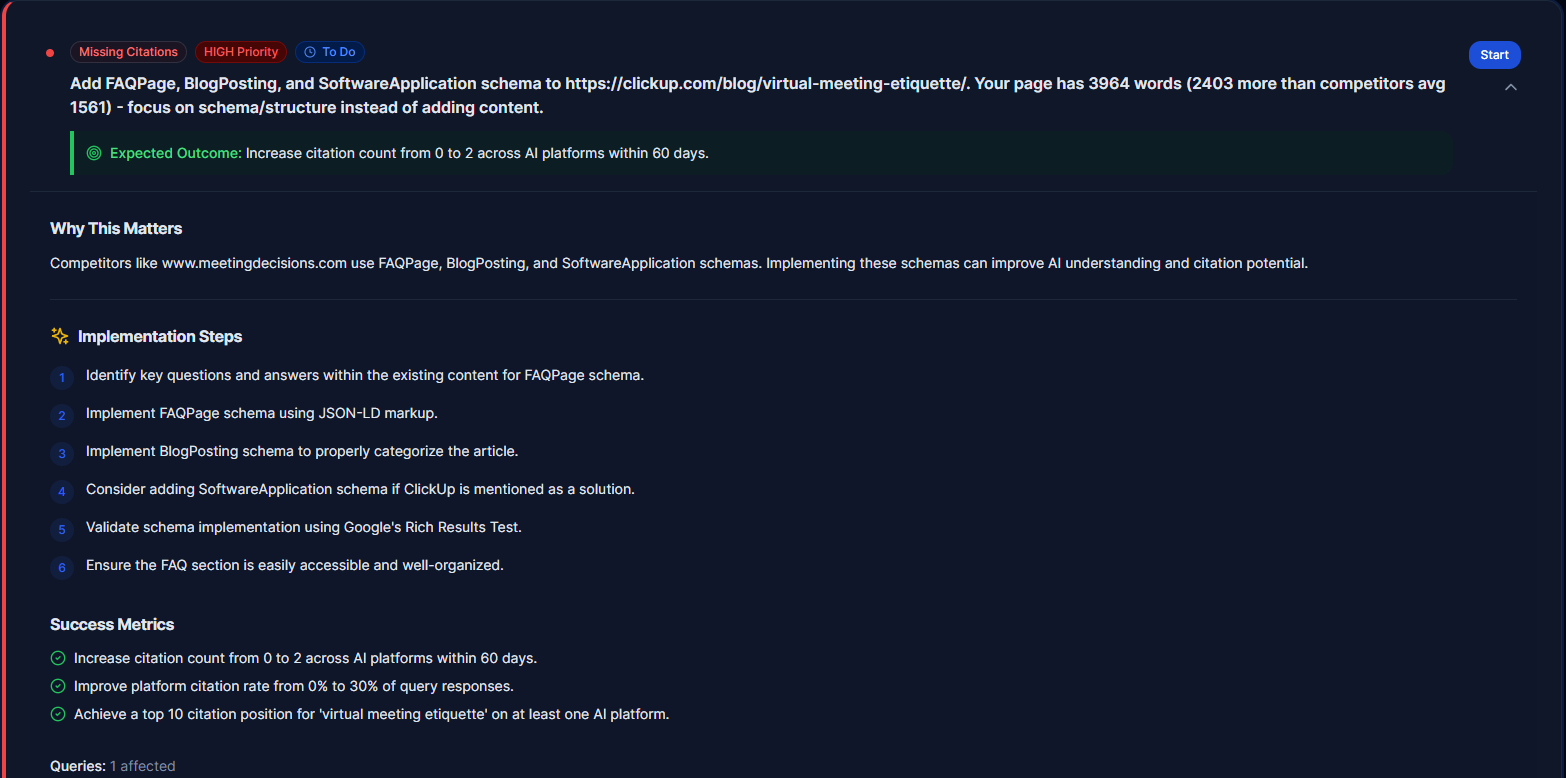
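Tallying cited URLs by source category turns this review into a count you can compare across competitors. A minimal sketch, assuming you maintain your own mapping from domain to category. Every domain and category label below is a placeholder:

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mapping from domain to source category. Maintain your own.
SOURCE_CATEGORIES = {
    "reviews.example.com": "review platform",
    "directory.example.org": "software directory",
    "news.example.net": "editorial roundup",
    "globex.example": "competitor blog",
}


def categorize(url: str) -> str:
    """Map a cited URL to a source category by its hostname."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return SOURCE_CATEGORIES.get(host, "uncategorized")


def citation_source_mix(cited_urls: list[str]) -> Counter:
    """Count citations per source category."""
    return Counter(categorize(u) for u in cited_urls)


cited = [
    "https://reviews.example.com/best-x",
    "https://www.reviews.example.com/top-10",
    "https://globex.example/blog/guide",
    "https://unknown.example/post",
]
mix = citation_source_mix(cited)
for category, count in mix.most_common():
    print(category, count)
```

A large "uncategorized" bucket is itself useful: it shows which domains to look at next and add to the mapping.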

Identify content and authority gaps

Cross-reference what you find against your own content library. Are there high-intent topics with no coverage? Pages that exist but are too shallow? Features or use cases that are well-described on competitor sites but absent from yours? Subtopics where third-party sources don't associate your brand at all?

Re-run and monitor over time

AI answer environments are dynamic. Competitive position shifts as new content is published, new mentions are earned, and AI systems update their synthesis. A baseline audit has value — but ongoing monitoring is what transforms it into a competitive advantage.
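Monitoring comes down to comparing one audit against the previous one. A minimal sketch, assuming each audit is stored as a mapping from prompt to whether the brand appeared. The function name `diff_snapshots` and the sample data are illustrative:

```python
def diff_snapshots(previous: dict[str, bool], current: dict[str, bool]) -> dict[str, list[str]]:
    """Compare two audits keyed by prompt; values say whether the brand appeared."""
    shared = previous.keys() & current.keys()
    return {
        # Absent last time, present now.
        "gained": sorted(p for p in shared if current[p] and not previous[p]),
        # Present last time, absent now.
        "lost": sorted(p for p in shared if previous[p] and not current[p]),
        # Prompts added to the prompt set since the last audit.
        "new_prompts": sorted(current.keys() - previous.keys()),
    }


# Hypothetical audits from two consecutive months.
january = {"best tools for X": False, "A vs B": True, "alternatives to A": True}
february = {
    "best tools for X": True,
    "A vs B": True,
    "alternatives to A": False,
    "how to solve Y": False,
}

changes = diff_snapshots(january, february)
print(changes)
```

Keeping new prompts separate from gains and losses matters: otherwise a growing prompt set can look like a change in visibility when nothing has moved.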

How to Close AI Citation Gaps

Closing AI citation gaps requires coordinated effort across content, digital PR, and entity coverage. There is no single lever. The brands that do this well treat it as an operating model, not a campaign.

1. Strengthen citation-worthy content

The pages that earn citations in AI answers share a set of characteristics: they are fact-rich, well-structured, specific, original, and directly aligned to the questions buyers ask. Audit your most important topic pages against these criteria. Prioritize depth over volume: one comprehensive, well-sourced piece is usually worth more than five thin blog posts.

2. Expand entity coverage across key themes

Build and reinforce the associations between your brand and the topics that matter: your category, the features you offer, the use cases you serve, the buyer outcomes you enable, and the problems you solve. This means creating content that explicitly addresses these themes — not just hoping they're implied by your product.

3. Improve third-party authority signals

Your owned content alone is not enough. AI systems synthesize from the full web ecosystem — reviews, directories, analyst commentary, editorial roundups, community discussions. Invest in digital PR, expert commentary, quality link earning, inclusion in authoritative comparison pieces, and review platform visibility. These third-party signals are often the difference between appearing and being cited.

4. Create comparison and alternatives content strategically

AI answers frequently synthesize from pages that frame category comparisons: "[Your brand] vs [Competitor]," "Best [category] tools," "[Category] alternatives." These formats are high-value because they directly match how buyers ask questions. Build them — both from your own site and by earning inclusion in third-party versions.

5. Fix messaging clarity

Vague positioning is a visibility problem. If your website, your third-party listings, and your PR coverage all describe your brand differently — or not clearly enough — AI systems may fail to consistently associate you with the right category, use cases, or differentiation. Alignment across all channels matters more than it did in traditional SEO.

6. Monitor continuously — not once

AI citation gaps don't stay fixed. Competitors publish new content. Review sites update their rankings. AI systems evolve. A gap you close today can re-open in six weeks if you're not watching. Build monitoring into your regular operating rhythm — not as a one-off exercise.

The Content Formats Most Likely to Improve AI Visibility

Not all content contributes equally to AI citation presence. Based on how AI systems synthesize answers, certain formats consistently perform better than others.

Format 01

Category-defining pages

Pages that define the category, explain how it works, identify who it's for, and establish evaluation criteria. These earn citations when buyers ask definitional questions.

Format 02

Comparison and alternatives pages

"[Brand] vs [Competitor]", "Best [category] tools", "[Category] alternatives." These map directly to how buyers phrase questions in AI search.

Format 03

Use-case pages

Pages organized by team, workflow, industry, or pain point. These capture the long tail of buyer-specific prompts that category-level pages miss.

Format 04

Data-backed thought leadership

Benchmark reports, trend analyses, original research. Original data gives AI systems something specific and attributable to cite, and it differentiates you from competitors publishing opinions alone.

Format 05

Glossary and educational pages

Especially valuable in fast-moving categories where AI systems need clear definitional anchors. These pages establish your brand as the authoritative voice on category terminology.

Format 06

Expert-led guides and frameworks

Content that provides structured, named frameworks — like the one in this article — gets cited more consistently than generic advice because it is distinct, referenceable, and attributable.

What Most Teams Get Wrong About AI Visibility

The strategic mistakes are predictable — and most of them come from applying old mental models to a new problem.

✗ Treating AI visibility as a simple extension of rankings - It overlaps with SEO, but it is not the same discipline. Optimizing for rankings without understanding how AI systems synthesize answers leaves the most important new channel unaddressed.

✗ Focusing only on owned content - Third-party authority — reviews, editorial mentions, directory listings, analyst coverage — is a primary driver of AI citation patterns. A brand with great owned content but a weak external footprint will consistently lose to competitors with stronger ecosystems.

✗ Tracking only branded prompts - Buyers who already know your name are not the growth opportunity. The discovery prompts — category queries, alternatives searches, comparison questions — are where new buyers are made. If you're only measuring branded visibility, you're missing the most important data.

✗ Optimizing content without analyzing competitor citation patterns - Your strategy needs to account for where competitors are being cited, not just where you're not. Understanding what's driving their AI visibility tells you which source types and content formats to prioritize.

✗ Running one audit and assuming the picture is stable - AI answer environments change constantly. Competitive citation patterns shift. New content and new mentions move the needle in both directions. A point-in-time snapshot is useful as a baseline — but only continuous monitoring turns it into a real-time advantage.

A Practical AI Visibility Scorecard for Marketing Teams

To move from one-off diagnosis to a repeatable operating model, marketing teams need a consistent measurement framework. Here's a scorecard structure that works across teams of different sizes and sophistication levels.

📍 Prompt Coverage

📣 Mention Frequency

🔗 Citation Frequency

⭐ Citation Quality

📊 Competitive Share of Voice

💬 Sentiment / Positioning

🗂️ Topic / Entity Coverage

📈 Change Over Time
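The scorecard can be kept as one small record per month, with "Change Over Time" derived from two records rather than stored. This is a sketch under stated assumptions: the field names, the 0 to 1 scales, and the -1 to 1 sentiment scale are choices made for illustration, and the numbers are invented:

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Scorecard:
    month: str                 # e.g. "2025-01"
    prompt_coverage: float     # share of tracked prompts where the brand appears
    mention_frequency: float   # share of answers naming the brand
    citation_frequency: float  # share of answers citing a brand-owned source
    citation_quality: float    # hypothetical 0 to 1 rating of the cited sources
    share_of_voice: float      # brand mentions divided by all brand mentions
    sentiment: float           # hypothetical -1 to 1 rating of how the brand is framed
    entity_coverage: float     # share of priority subtopics where the brand appears


def change_over_time(previous: Scorecard, current: Scorecard) -> dict[str, float]:
    """Per-metric difference between two scorecards (current minus previous)."""
    deltas = {}
    for field in fields(Scorecard):
        if field.name == "month":
            continue
        delta = getattr(current, field.name) - getattr(previous, field.name)
        deltas[field.name] = round(delta, 4)
    return deltas


# Invented numbers for two consecutive months.
january = Scorecard("2025-01", 0.25, 0.30, 0.10, 0.50, 0.18, 0.2, 0.40)
february = Scorecard("2025-02", 0.40, 0.35, 0.10, 0.55, 0.22, 0.1, 0.45)

deltas = change_over_time(january, february)
for metric, delta in deltas.items():
    print(f"{metric}: {delta:+.2f}")
```

A frozen dataclass keeps past months immutable, so a later correction has to be recorded as a new scorecard rather than a silent edit.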

Questions to ask every month

Which prompts drive competitor visibility while we are missing from the answers?

Where are we mentioned but not cited — and what sources are being cited instead?

Which pages or third-party sources are helping competitors the most?

Which subtopics do we consistently fail to appear in?

What changed after we published new content or earned new mentions?

How does our visibility differ across ChatGPT, Perplexity, and Gemini?

These questions don't need to take hours to answer. But they do need to be asked regularly — with structured data behind them, not guesswork.

Where Authority Radar Fits In

The strategy described in this article is not complicated. But executing it consistently — across multiple platforms, competitors, prompt categories, and time periods — is operationally difficult without the right tooling.

Most teams start with manual prompt testing: running queries in ChatGPT and Perplexity, screenshotting results, comparing notes in a spreadsheet. That process works for a first audit. It doesn't scale. And it produces a snapshot, not an operating model.

Authority Radar is built for the step after that.

It helps teams move from ad hoc prompt testing and scattered observations to structured AI prompt tracking, competitive comparisons, mention and citation monitoring, and consistent reporting. Instead of asking "are we showing up?" once a quarter, teams can track movement, spot competitive shifts, identify emerging gaps, and prioritize action — continuously.

If your team is already investing in SEO, content, and digital PR, the immediate next step is understanding how those efforts actually translate into AI answer presence — and where the gaps still are. That's the visibility layer that most teams haven't built yet. It's also the one that's increasingly difficult to compete without.

Conclusion

AI Search Is Creating a New Visibility Layer — And Most Brands Aren't Playing on It.

The brands that win in AI search won't necessarily be the ones that publish the most content or have the most backlinks. They'll be the ones that understand where they're missing, why competitors are being cited, which authority signals matter, and how to improve visibility systematically.

That requires a different operating model than traditional SEO. It requires prompt-level thinking, entity coverage, third-party authority investment, and continuous monitoring — not just content calendars and keyword targets.

The good news: most competitors haven't built this yet either. The brands that close their AI citation gaps now — before the category calcifies — will be the hardest to displace later.

Ready to find your AI citation gaps?

Start by auditing your current AI visibility, benchmarking top competitors, and identifying where the gaps are largest. Then build a roadmap to close them.

Audit your AI visibility

Benchmark competitors

Identify citation gaps

Build a visibility roadmap

Frequently Asked Questions About AI Citation Gaps

What is an AI citation gap?

An AI citation gap is the difference between the prompts where your brand should appear — based on your market position and category — and the answers where AI platforms actually mention or cite you. It's the gap between the visibility you've earned and the visibility you're actually getting in AI-generated answers.

Why is my brand not showing up in AI answers?

Common causes include weak topic coverage, low third-party authority, unclear or inconsistent brand positioning, shallow content that doesn't directly answer buyer questions, and stronger competitor citation patterns built through a richer external presence. Usually it's a combination of several factors rather than one single issue.

Are AI citations the same as backlinks?

No. Backlinks are a traditional web signal used by search engines to evaluate authority. AI citations refer to the sources AI systems appear to reference or rely on when generating answers. There is meaningful overlap — authoritative sites that earn backlinks often earn citations too — but they are not the same thing and don't respond to the same strategies.

How do I improve brand visibility in ChatGPT or Perplexity?

Improve the depth and directness of your content, expand your entity and topic coverage, strengthen third-party authority signals through digital PR and review platforms, build comparison and use-case pages, and monitor your prompt-level visibility continuously. No single action is sufficient — it requires coordination across content, authority, and measurement.

Is AI visibility different from SEO?

Yes — though it overlaps significantly. Traditional SEO focuses on discoverability through keywords, links, and rankings. AI visibility also depends on how AI systems synthesize sources, identify relevant entities, evaluate authority, and decide what to mention or cite in generated answers. The two disciplines share inputs but require different strategies and different measurement frameworks.

How should marketing teams measure AI visibility?

Track prompt coverage (which queries you appear in), mention frequency, citation frequency and quality, competitive share of voice, sentiment and positioning quality, topic and entity coverage, and how all of these metrics change over time. The goal is a repeatable monthly scorecard, not a one-time audit.

How quickly can AI citation gaps be closed?

It varies. Some improvements — particularly content depth and messaging clarity — can begin shifting AI answer patterns within weeks. Building third-party authority and entity coverage takes longer, typically three to six months for meaningful movement. The most important factor is starting with an accurate diagnosis and monitoring consistently so you can see what's working.